Discussions

Avatar Group Status endpoint

Does the response include preview_url?

Avatar Model III Bad Motion.

As mentioned in our previous conversation, here are the video ids. Both are generated with model III.

Background Removal for Custom Video Avatars via API

Hi HeyGen Support Team,

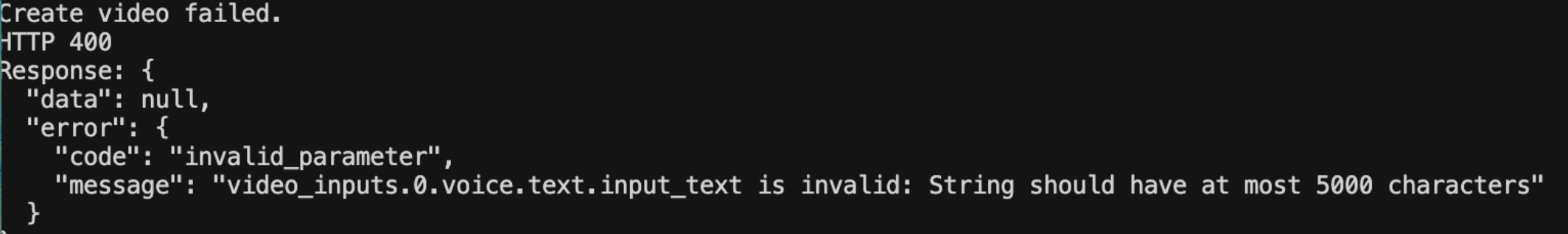

/v2/video/generate input string too long

My Personal avatar

Hi,

Group id training stuck at pending

Dear support, could you please check group id 228684644a8d41ab9807e32fabc07d2d, which has been showing pending status for a while? Thank you.

Avatar Model III Bad Motion

When I create an avatar from a photo on your website, it has great movement on model III. When I do it through an API call, it's a video that plays for a little and then reverses, which looks bad.

AVATAR MODEL III API Parameters

Hey guys, I'm trying to achieve the same result you get with model III through an API call, but I'm struggling: the avatar moves as if it is having a stroke from time to time. What are the exact settings/parameters you use in your browser generator, so I can set them in the API call?